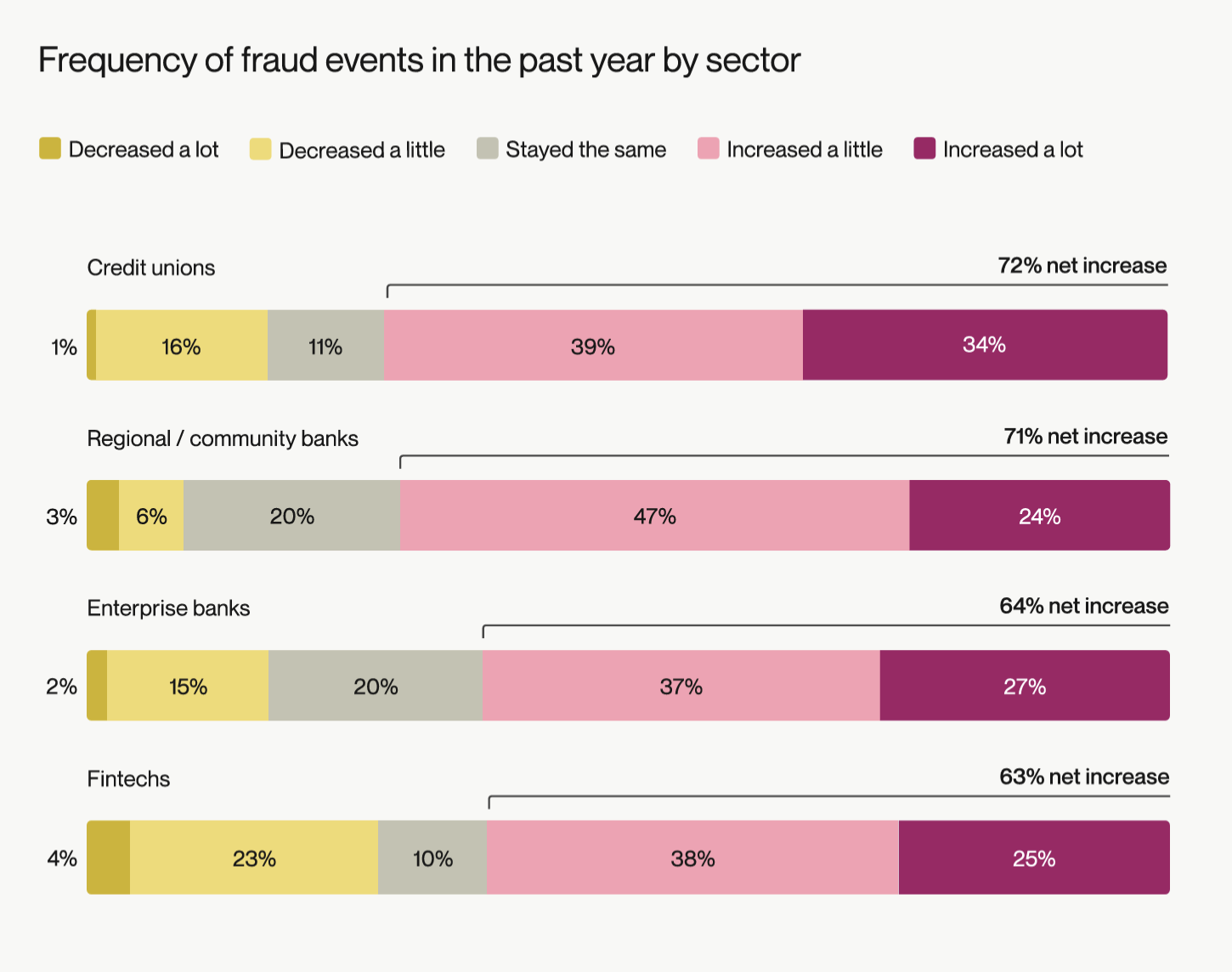

In financial services, fraud is becoming more frequent and more sophisticated. Alloy’s 2026 State of Fraud Report shows that 67% of financial organizations say fraud events are increasing, and 91% have noticed more financial crimes involving artificial intelligence (AI) technologies.

This shift is especially pronounced for credit unions and regional/community banks, which, at 72% and 71% respectively, reported the largest increases in fraud events.

Source: Alloy’s 2026 State of Fraud Report

As fraudsters use AI technology to scale synthetic identities, automate scams, and coordinate attacks across institutions, financial organizations are applying their own AI strategies to surface suspicious patterns and anomalies in real time.

But AI is not a silver bullet for fraud detection. Operationalizing AI requires strengthening the systems around it: broadening data inputs, applying intelligent orchestration, and aligning friction to risk. It also involves incorporating AI not as a superficial layer, but within the decisioning system itself. Once the fundamentals are in place, AI can significantly increase fraud detection efficiency, reducing manual reviews and freeing up valuable time for agents.

Let’s break down the four steps to successfully operationalizing AI in fraud prevention and delivering a seamless digital account opening experience while protecting your institution.

AI-powered identity and fraud prevention platforms run on data and machine learning. In machine learning, the reliability of your models depends on the breadth and quality of the signals feeding them. If inputs are narrow or siloed, AI will only scale incomplete decisioning, delivering minimal to no impact.

That’s why operationalizing AI starts with expanding data sources from multiple vendors across identity verification, device and IP intelligence, behavioral activity, velocity patterns, application attributes, and portfolio-level trends. Each unique signal adds context.

Broader data inputs allow institutions to validate legitimacy through consistency across multiple dimensions rather than relying on just one or two data points from a single data vendor.

In fraud prevention, when AI operates with too few signals, uncertainty rises and manual reviews follow. Instead of improving efficiency, the system simply generates more alerts and more friction without delivering insights that teams can act on. When institutions evaluate a broader range of data sources in context, decision confidence increases. This is the first condition that allows AI to drive more precise approvals instead of more unnecessary escalations.
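To make the link between signal coverage and manual reviews concrete, here is a minimal Python sketch. The signal names, the `min_signals` threshold, and the decision rules are all hypothetical and chosen only for illustration; they do not describe any vendor's or platform's actual logic.

```python
from typing import Optional

def assess(signals: dict[str, Optional[bool]], min_signals: int = 4) -> str:
    """Decide from hypothetical signals.

    True  = consistent with a legitimate applicant
    False = inconsistent
    None  = the signal was not available
    """
    observed = {name: ok for name, ok in signals.items() if ok is not None}
    # Too few observed signals: not enough context for a confident decision.
    if len(observed) < min_signals:
        return "manual_review"
    failures = [name for name, ok in observed.items() if not ok]
    if not failures:
        return "approve"
    # Illustrative rule: deny when at least half of the observed signals fail.
    if len(failures) >= len(observed) / 2:
        return "deny"
    return "manual_review"
```

With only one signal observed, the sketch escalates to manual review even though nothing looks wrong. With four consistent signals, the same applicant is approved automatically.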

Data orchestration is what turns raw data signals into meaningful context. It enables financial institutions to adopt a multi-vendor approach that connects identity, device, behavioral, and application data into a unified view of each applicant at the moment a decision is made during account origination.

Without orchestration, more data does not improve outcomes. The data may exist, but it’s not being activated in a coordinated way. Information remains fragmented across systems, and teams rely on manual work to reconcile mismatched fields or incomplete records.

When data is unified, standardized, and delivered without delay, AI can operate within a stable operational foundation. Orchestration ensures that intelligence is applied consistently across onboarding, ongoing activity monitoring, and investigation workflows rather than existing as disconnected outputs.
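As a rough illustration of what unifying and standardizing multi-vendor data can look like, the sketch below maps raw payloads into a single applicant view. The vendor names, payload fields (`match`, `riskScore`), and adapter functions are assumptions made up for this example, not real vendor APIs.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

@dataclass
class ApplicantView:
    application_id: str
    signals: dict[str, Any] = field(default_factory=dict)
    missing: list[str] = field(default_factory=list)

# Hypothetical adapters: each maps one vendor's raw payload to standard fields.
def adapt_identity(raw: dict) -> dict:
    return {"identity_verified": raw["match"] == "FULL"}

def adapt_device(raw: dict) -> dict:
    # Rescale an assumed 0-100 vendor score to the 0-1 range.
    return {"device_risk": float(raw["riskScore"]) / 100.0}

ADAPTERS: dict[str, Callable[[dict], dict]] = {
    "identity_vendor": adapt_identity,
    "device_vendor": adapt_device,
}

def build_view(application_id: str, payloads: dict[str, dict]) -> ApplicantView:
    """Merge whatever vendor payloads arrived into one standardized view."""
    view = ApplicantView(application_id)
    for source, adapter in ADAPTERS.items():
        raw = payloads.get(source)
        if raw is None:
            # Record the gap explicitly instead of failing silently.
            view.missing.append(source)
            continue
        view.signals.update(adapter(raw))
    return view
```

Tracking `missing` sources alongside the signals is one possible design choice: downstream rules can then tell the difference between "no risk found" and "no data received."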

Once data is unified through orchestration, the next question is how that intelligence should be applied within the decision flow. A waterfall approach answers that question.

A waterfall model sequences controls based on risk rather than applying every check to every applicant at once. Signals are evaluated in stages. Low-risk applicants move through streamlined verification, while higher-risk indicators trigger additional scrutiny. When clear signs of fraud appear, decisive action follows.

This is what’s referred to as a friction-right strategy. The objective is not to remove friction entirely, but to align it to demonstrated risk. Applying the same level of verification to every applicant creates unnecessary delays and strains operational capacity. Applying it selectively preserves both protection and the digital account opening experience.

A structured waterfall also improves operational efficiency. Institutions can delay higher-cost or higher-friction checks until risk thresholds are met, reducing unnecessary vendor spend and manual review volume. Legitimate applicants move forward faster, and fraud teams focus their attention where it has the greatest impact.

This is one of the key factors that allows AI to scale responsibly. Instead of applying intelligence uniformly and generating blanket friction, AI operates within defined frameworks that reinforce precision.
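The staged evaluation described above can be sketched in a few lines of Python. The stage names, risk fields, and thresholds here are hypothetical; real thresholds would come from an institution's own risk policy.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Stage:
    name: str
    check: Callable[[dict], float]  # returns a risk score in [0, 1]
    approve_below: float
    deny_above: float

def run_waterfall(applicant: dict, stages: list[Stage]) -> tuple[str, list[str]]:
    """Run checks in order and stop as soon as one stage is decisive."""
    checks_run: list[str] = []
    for stage in stages:
        checks_run.append(stage.name)
        risk = stage.check(applicant)
        if risk < stage.approve_below:
            return "approve", checks_run
        if risk > stage.deny_above:
            return "deny", checks_run
        # Inconclusive: fall through to the next, costlier check.
    return "manual_review", checks_run

# Ordered from cheapest / lowest friction to most expensive (illustrative only).
STAGES = [
    Stage("basic_identity", lambda a: a["identity_risk"], 0.2, 0.9),
    Stage("device_and_ip", lambda a: a["device_risk"], 0.3, 0.8),
    Stage("document_verification", lambda a: a["document_risk"], 0.5, 0.7),
]
```

A low-risk applicant exits after the first stage, so the later checks (and their vendor costs) never run. Only applicants who remain ambiguous after every stage reach manual review.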

With broad data inputs, orchestration, and risk-based sequencing in place, AI can finally operate as intended. The final step is embedding that intelligence directly into decision workflows so it drives action, not just analysis.

This is where the concept of actionable AI comes into focus.

Actionable AI is an approach to leveraging AI and machine learning in fraud prevention that connects risk detection directly to execution. Rather than stopping at risk scores or anomaly flags, it translates model outputs into structured context and policy-aligned next steps within the systems teams already use.

This distinction matters because detection alone does not create operational value. A model can surface suspicious activity, but if investigators still need to gather context manually or determine the appropriate response on their own, efficiency gains stall. Actionable AI closes that gap by delivering intelligence directly into fraud and compliance workflows, enabling decisions to be made immediately and consistently.
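One way to picture the step from a model output to a policy-aligned next step is a simple lookup against a policy table. The thresholds, decision labels, and next-step text below are assumptions for illustration and are not drawn from any specific product.

```python
from dataclasses import dataclass

@dataclass
class Action:
    decision: str
    next_step: str
    context: list[str]

# Hypothetical policy table: (minimum score, decision, next step), highest first.
POLICY = [
    (0.85, "deny", "block application and open an investigation"),
    (0.50, "step_up", "request document verification"),
    (0.00, "approve", "open account"),
]

def to_action(score: float, reason_codes: list[str]) -> Action:
    """Translate a risk score and its reason codes into a concrete next step."""
    for threshold, decision, next_step in POLICY:
        if score >= threshold:
            # Carry the reasons along so investigators start with context.
            return Action(decision, next_step, reason_codes)
    raise ValueError("score must be non-negative")
```

The point of the sketch is that the output is not just a number. It is a decision, a next step, and the context behind it, delivered together.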

Alloy’s Fraud Attack Radar brings this model to the portfolio level by identifying coordinated activity across multiple applications. It surfaces shared infrastructure, velocity anomalies, and emerging attack patterns that may not be visible in isolated reviews. Because that intelligence feeds directly back into onboarding decision flows, institutions can contain campaigns early rather than reacting after losses accumulate.
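To illustrate the general idea of spotting shared infrastructure across applications, here is a minimal Python sketch that groups applications by common device or IP values. It is a generic illustration under assumed field names (`device_id`, `ip_address`, `application_id`), not a description of how Fraud Attack Radar works internally, and it ignores time windows for simplicity.

```python
from collections import defaultdict

def find_clusters(
    applications: list[dict],
    keys: tuple[str, ...] = ("device_id", "ip_address"),
    min_size: int = 3,
) -> dict[tuple[str, str], list[str]]:
    """Return groups of application IDs that share the same attribute value."""
    groups: dict[tuple[str, str], list[str]] = defaultdict(list)
    for app in applications:
        for key in keys:
            value = app.get(key)
            if value:  # skip missing or empty attributes
                groups[(key, value)].append(app["application_id"])
    # Keep only groups large enough to suggest coordinated activity.
    return {k: ids for k, ids in groups.items() if len(ids) >= min_size}
```

Three applications that each look ordinary in isolation but share one device would surface here as a single cluster, which is the kind of pattern an isolated review can miss.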

When AI operates within the workflow instead of alongside it, it becomes part of the operational engine. That is what it means to operationalize AI. Intelligence drives real-time response, investigative lift decreases, and institutions can scale growth without scaling friction.

Operationalizing AI makes fraud prevention more precise, scalable, and aligned to growth. But that precision does not come from models alone. It depends on the systems that support them, including broad data inputs, coordinated orchestration, risk-aligned sequencing, and intelligence embedded directly into decision workflows.

When AI operates within this structured foundation, fraud detection becomes more efficient and more reliable. Manual review volumes decline because decisions are supported by complete context. Approval confidence increases because signals are evaluated holistically. Onboarding moves faster while maintaining strong controls.

This is already happening through the MANTL–Alloy partnership. The integration has processed more than 2 million deposit applications across 150+ shared financial institutions, with an average automated decision rate exceeding 80%.

Operationalizing AI closes the gap between signal and action inside the digital account opening flow. Financial institutions that build this foundation position themselves to protect trust, improve efficiency, and scale growth confidently in an increasingly complex fraud environment.

Alloy’s actionable AI suite enables banks and credit unions to improve efficiency while maintaining strong fraud controls.